26: My Thoughts on ARM + Nvidia, TikTok's Non-Sale, Snowflake IPO, Amazon's JOBS JOBS JOBS, and Vinyl Sales > CD Sales

"But first, napkin math..."

"The truth is whatever you can get away with."

"No, that’s journalism. The truth is whatever you can’t escape."

-Greg Egan, Distress

Sorry to those who were looking forward to the Mark Leonard interview mentioned in edition #25.

The link was live at the moment when I posted the letter, and 15-20 minutes later I started getting messages that it didn’t work. Looks like it was taken down soon after the email went out (and unfortunately, I can’t edit emails after they go out — but I updated the website version). I don’t know what happened to make the podcast disappear, but I have a few guesses…

❄️ By the time you read this, Snowflake should have IPO’ed (I wrote about their S-1 and liked this good deep-dive). Impressive to think that less than a year ago, it raised money as a private company around $12bn, and now it’s about to go public at more than $33bn (after the original IPO pricing was upped twice from $80 midpoint to $120). Talk about an enthusiastic Mr. Market!

Series G-2020 val. $12B, ~$38 pps

Series F-2018 val. $4B, ~$15 pps

Series E-2018 val. $1.8B, $7.46 pps

Series D-2017 val. $610M, $3.50 pps

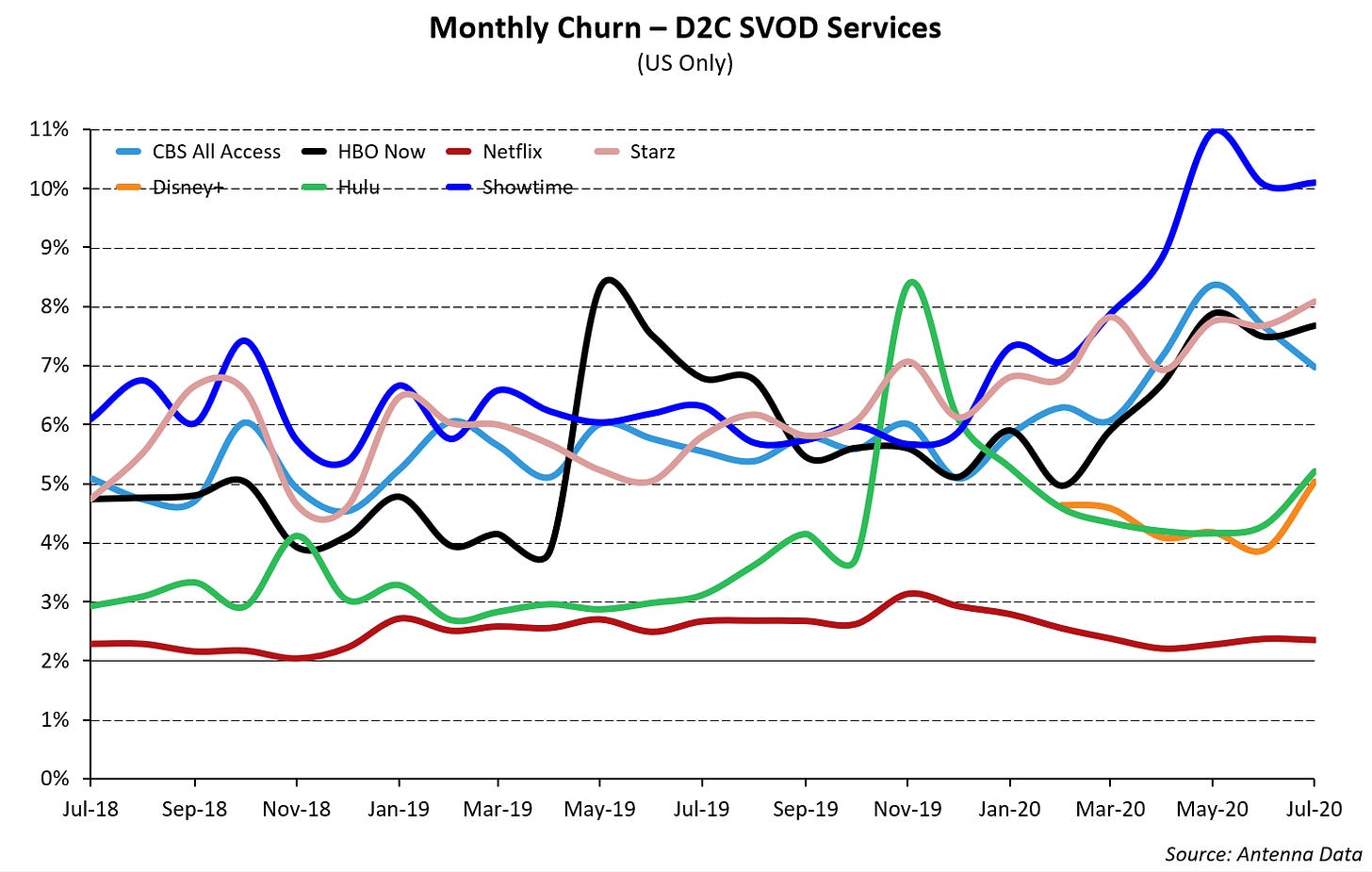

Series C-2015 val. $330M

Investing & Business

‘Streaming Wars: Churn Edition’

Via Matthew Ball

My Notes on ARM + Nvidia

Here are some of my highlights from the call that Nvidia had about their purchase of ARM Holdings from Softbank for $40bn in cash and stock (Jensen tried to be cute in his letter about the deal, starting: “We are joining arms with Arm”).

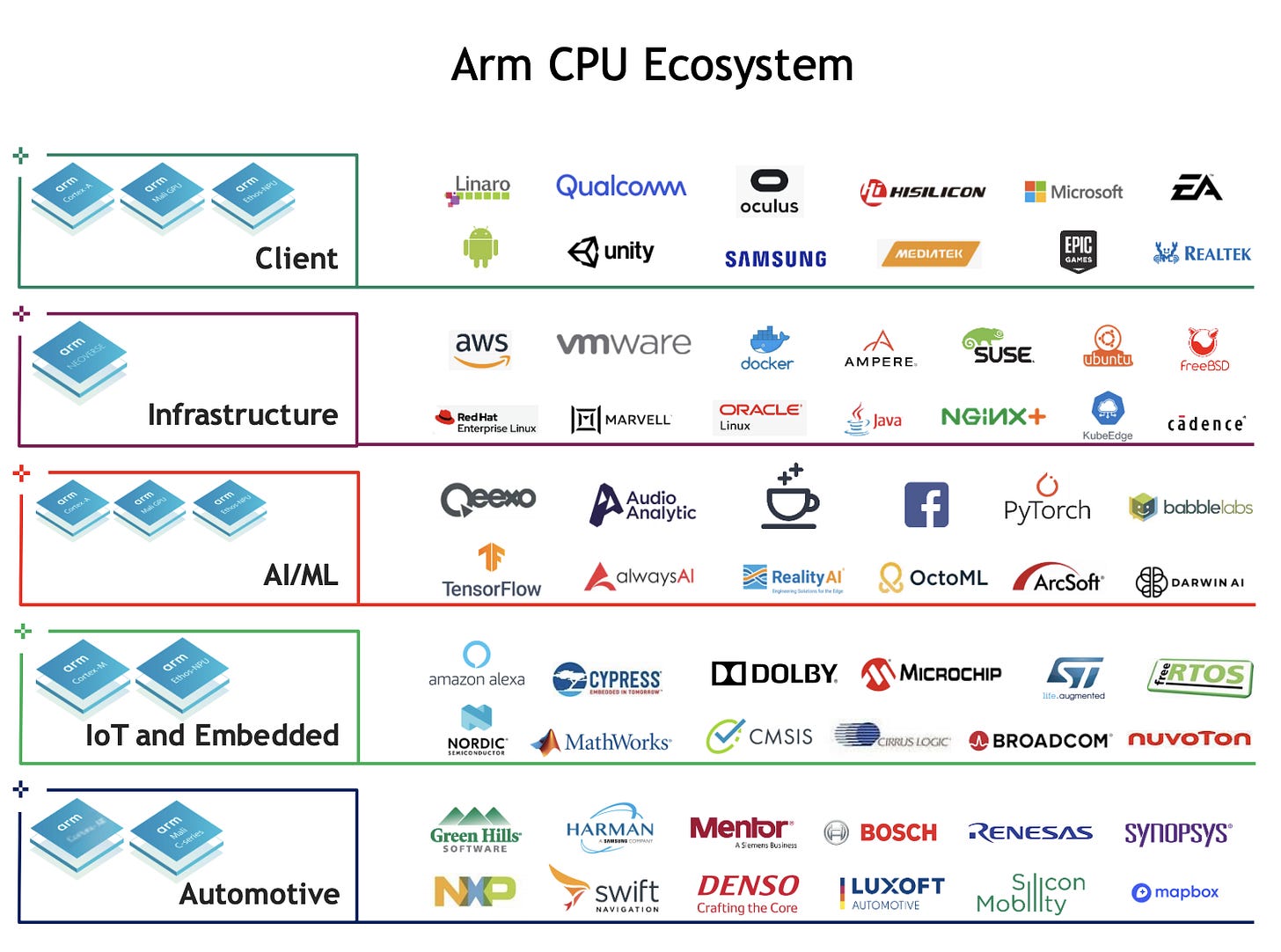

But first, napkin math based on the number of ARM chips sold last year (22.8bn) and ARM’s reported revenue ($1.8bn) shows about 8 cents per chip going to ARM. That may seem low if you mostly think of ARM chips as smartphone CPUs/SoCs, but the company does a lot more than that, as you can see in the “ecosystem” graph above. A bunch of tiny ARM chips are going into all kinds of stuff, and many are not very powerful and very cheap, hence the low average revenue per chip.
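For anyone who wants to redo the napkin math, here is a minimal sketch. It uses only the two figures quoted above; the variable names are my own.

```python
# Figures quoted above: ARM chips shipped last year and ARM's reported revenue.
chips_sold = 22.8e9       # 22.8bn chips
arm_revenue_usd = 1.8e9   # $1.8bn

# Average revenue ARM collects per chip, in dollars and in cents.
revenue_per_chip_usd = arm_revenue_usd / chips_sold
revenue_per_chip_cents = revenue_per_chip_usd * 100

print(f"ARM revenue per chip: about {revenue_per_chip_cents:.1f} cents")
```

This is an average across everything from tiny microcontrollers to smartphone SoCs, so it says nothing about what any individual chip pays.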

On to the call, Jensen said:

Arm's customers have shipped 180 billion chips based on its CPU. And its technology is used by 70% of the world's population. [...]

we have also used Arm's technology for years, including our SoC for Nintendo Switch console, our self-driving car computer, the new Mellanox BlueField SmartNIC and our Jetson robotics computer. [...]

By combining the world's most popular CPU with NVIDIA's AI computing platform, we're creating the leading computing company for the age of AI.

It’s been quite clear for a bit that Jensen sees the future as being eaten by AI, and with the compute unit getting progressively larger and disaggregated, up to what is now in his view the data-center level.

This explains all the focus on positioning Nvidia for these realities, with a big hardware and software focus on AI, and with data-center computing (including all the east-west data that needs to flow through, you guessed it, the kind of products that Mellanox makes).

This rise of AI partly comes from improving algorithms and increasing compute power available, but also because there’s a lot more data being produced on which AI techniques can be used:

Today, the Internet connects billions of people to giant cloud data centers. In the future, trillions of devices will be connected to millions of data centers, creating a new Internet of Things that is thousands of times bigger than today's Internet of people from smart retail to manufacturing and service robots, self-driving cars to smart streets and cities.

The software powering this new Internet will not be written by humans, but by computers, learning from data [...]

Back to the data-center:

in the data center, NVIDIA will turbocharge Arm's R&D and meet cloud computing customers demand for a higher velocity… ARM server CPU road map [...]

We'll offer Arm customers access to NVIDIA's AI and GPU IP. We will boost Arm's vast software ecosystem with NVIDIA's AI and accelerated computing platform. And we will offer cloud data center customers, the broader computer industry, a stronger server CPU road map

They’re keeping the open license model and the UK base, and they say the deal increases their TAM to $250 billion, “from computer graphics, smartphones and other devices, data centers, automotive, edge and IoT”.

The CFO:

We expect the acquisition to close in approximately 18 months [...]

Arm has a great business model with very high-margin reoccurring revenues. In the trailing 12 months ended March, unaudited pro forma IFRS revenue was $1.8 billion, with 94% gross margins, and adjusted pro forma EBITDA margin of approximately 35%.

Note, this does not include the IoT services group, which is not part of this transaction.

Money deets: $21.5 billion in common stock and $12 billion in cash, $5 billion in an earn-out of cash or stock based on Arm financial performance targets, and $1.5 billion in equity to Arm employees for post-closing retention.
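A quick sanity check that those pieces add up to the $40bn headline number. This is just a sketch using the four amounts listed above; the labels and variable names are mine.

```python
# Deal components as listed above, in billions of US dollars.
deal_components_bn = {
    "common stock": 21.5,
    "cash": 12.0,
    "earn-out (cash or stock)": 5.0,
    "equity for Arm employees": 1.5,
}

total_bn = sum(deal_components_bn.values())

# Print each component with its share of the total consideration.
for name, amount in deal_components_bn.items():
    share = amount / total_bn
    print(f"{name:<26} ${amount:>5.1f}bn  {share:6.1%}")
print(f"{'total':<26} ${total_bn:>5.1f}bn")
```

So a bit more than half of the consideration is Nvidia stock, and the earn-out only counts if Arm hits its targets.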

Moving on to the Q&A, this answer by Jensen reiterates his vision for the future of computing in the data-center:

because of the diverse workloads and because of the intense amount of computation and the data processing that has to happen because no computer stands alone anymore. And the type of application it runs like artificial intelligence required acceleration, but the architecture that's the most sensible that the industry is gathering around is a multi-processor or a multi-type of processor, what is otherwise known as heterogeneous type of a computing architecture.

The CPU is fantastic at low latency, single threaded, really, really predictable latency type of processing. The GPU is really fantastic at high throughput data processing. And the DPU is really good at network and serial data movements, security processing.

These 3 types of processors will define the future of computing. It is exactly the reason why we're so excited about this acquisition that with Arm and NVIDIA and Mellanox, we have 3 computing platforms, one for networking, one for high throughput computing, accelerated computing and AI computing, and one for CPUs and single-threaded computing

Answering a question about how Nvidia can be successful with ARM server CPUs:

There are 3 ways that we will turbocharge the R&D of our server CPU road map.

The first is NVIDIA's R&D capacity. Our R&D platform is much larger than Arm's [...]

The second is the infrastructure that is required to turn a CPU core into a data center server platform. It includes the SoC, of course, the rest of the system logic on the motherboard. The rest of the system, including the GPU, the DPU, the system software, all of the algorithms on top, the various system stacks necessary for the various main applications, whether it's virtualized environments or high-performance computing environments or containerized orchestrated type of environments, micro services type of environments. [...] for the very first time, we'll be able to create a choice for the data center world, that is absolutely world-class that is complete… fully integrated, fully developed and optimized [...]

lastly, there are many things that we're developing, both of us that are developing in our future road maps that we can now harmonize. And so the -- just the existing engineering we already have in our company when we put them target them to bear focus on to bear on Arm CPU server strategy will accelerate tremendously

This level of integration between the various components is what has been missing so far with ARM server CPUs, according to Jensen. The x86 ecosystem is very mature on that front, and Nvidia’s bet is that they now have enough control over all the pieces of the puzzle to create that for ARM server stacks.

On the scale of the ecosystems at play:

NVIDIA sells something along the lines of, call it, 100 million chips a year. But last year, Arm sold 22 billion chips. We believe all of those chips in the future are going to include AI computing. We believe all of those chips in the future will be accelerated computing. And we don't have to build all of them. We would love to extend our architecture to all of them. [...]

Through the Arm network and ecosystem and this incredible support system they've created over the last 30 years and the reputation for supporting IP that they've created, we're going to be able to take our technology through that. That is incredibly exciting to me

So that’s a big part of the grand vision for the strategic fit between the companies. They’re seeing that 2+2=5 in this case.

On the importance of owning this power-efficient platform going into the AI and edge computing/IoT era:

The Arm CPU is so energy efficient. And the NVIDIA GPU is so energy-efficient that we could increase the adoption of accelerated computing [...] we can now build very sophisticated AI chips in just a few -- in just a few 100 milliwatts. And that fits into a very large number of computing devices. [...]

as the world leader in AI computing and Arm the most popular CPU and the largest ecosystem in the world, we should be able to connect these 2 things together

Here we get a view inside the margin profile of the IoT group that wasn’t part of the deal:

Q: You said adjusted EBITDA margins of 35%. SoftBank's own recent presentation suggested EBITDA margins below 15%

A: we are just buying the IPG group and leaving the IoT data science group on that. So that is the most of the difference between the two.

Why did Nvidia want to buy ARM, since they already had a license to develop their own ARM chips?

There are 3 reasons why that we should buy this company, and we should buy it as soon as we can.

And the reason for that, number one, is this. As you know, we would love to take NVIDIA's IP through Arm's network.

Unless we were one company, I think the ability for us to do that and to do that with all of our might is very challenging. And so I don't take other people's products through my channel. I don't expose my ecosystem to other companies' products. The ecosystem is hard earned. It took 30 years for them to get here. [...]

Number two, we would like to lean in very hard into the Arm CPU, data center platform. There's a fundamental difference between a data center CPU core and a data center CPU chip and a data center CPU platform, a data center computing platform. [...]

Third reason, we want to go invent the future of cloud to edge. The future of computing where all these autonomous systems are powered by AI and powered by accelerated computing and all the things that we've been talking about, that future is being the we speak, and there are so many great opportunities there.

Edge data centers, 5G edge data centers, autonomous machines of all sizes and shapes, autonomous factories, we -- NVIDIA has built a lot of software, as you guys have seen, Metropolis, Clara, Isaac, Drive, Jarvis, Aerial, all of these platforms are built on top of Arm.

And finally, why is power-efficiency so important in the data center (it’s fairly obvious why on mobile, battery powered devices, but some may not realize just how important it is also for DCs):

Arm is the most energy-efficient architecture in the world. And energy efficiency in a power limited data center, which is always power limited. Whatever size data center you see, there's a limit to the power that it provisions. The more energy efficiency, the greater number of processors you could fit inside, the more throughput or the more customers you could serve.

So whether you want to think about it as in dollars per compute or the dollars you can make per compute with your data center, either way the Arm energy efficiency is extraordinary. That is exactly the reason why Amazon is so excited about Graviton 1 and Graviton 2. And you're going to see many others that want to follow suit.

Something I pointed out in edition #3, and that is worth repeating here, because it’s fun trivia:

Fun fact: Apple was one of the founding partners of ARM, along with Acorn and VLSI Technology in 1990. Story from the LA Times from back in the day.

(I know the company name is not supposed to be capitalized, but I think it looks stupid otherwise — and anyway it was originally an acronym-with-an-acronym-inside for "Acorn RISC Machine", which, if you decompile it fully, would be: “Acorn Reduced Instruction Set Computer Machine”, or ARISCM, which kind of sounds retro-futuristic sci-fi badass)

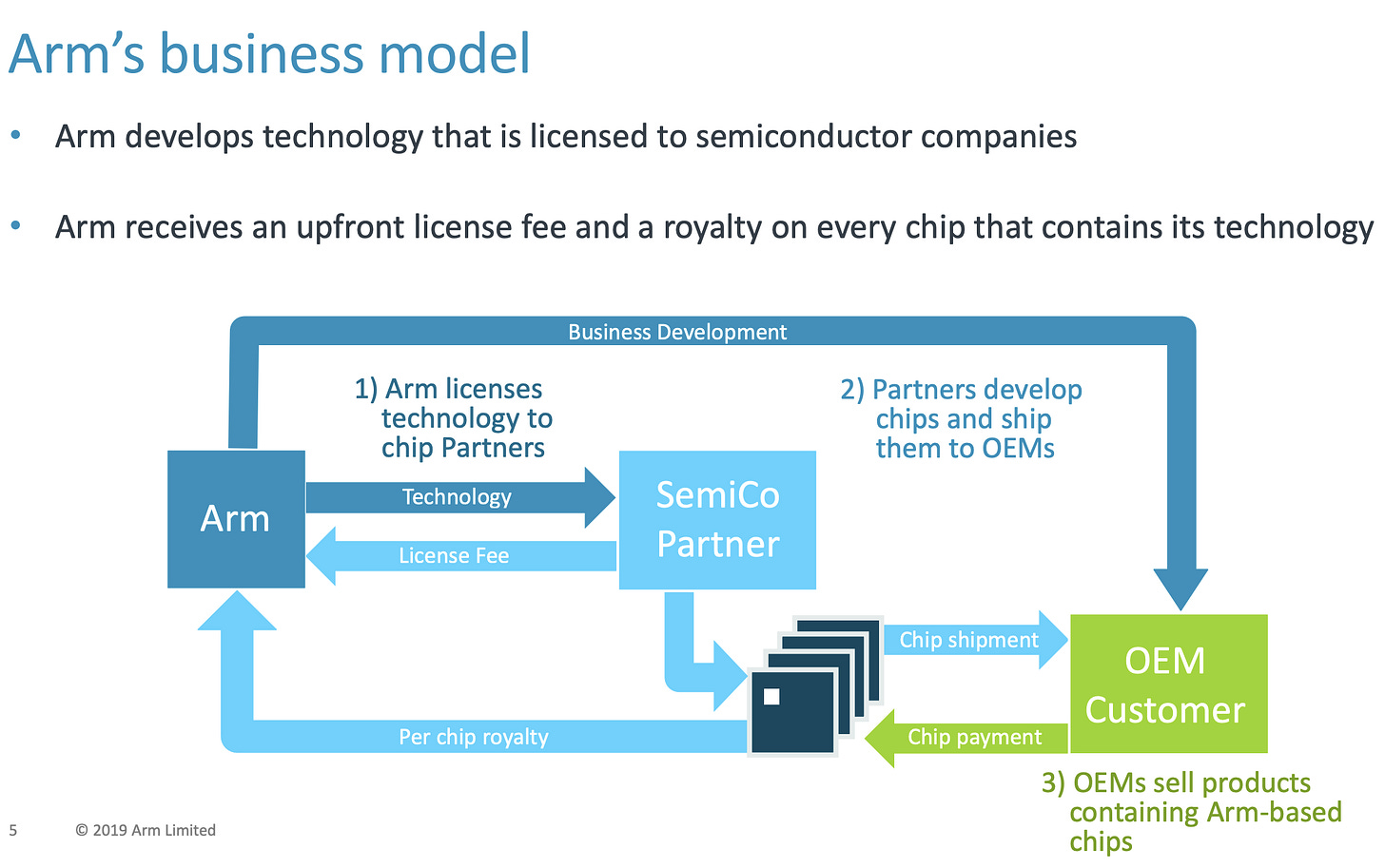

I’ll leave you on this flow chart, which highlights the fact that ARM doesn’t have the most simple business model, but it’s effective at getting their IP to be built into products at a scale that no company alone could do:

Update: After having written all this, Ben Thompson published a public post on Nvidia & ARM, which is a pretty skeptical take on Nvidia’s ability to maintain ARM’s neutrality.

TikTok’s Non-Sale

ByteDance will place TikTok’s global business in a new US-headquartered company with Oracle investing as a minority shareholder [...]

Oracle will have a stake in the whole of TikTok and not just the US operations, while ByteDance, the Chinese group that owns the app used by millions of teenagers, will be the majority shareholder of the new entity. (Source)

So basically, it wasn't a sale, Trump caved on what he had demanded "for national security reasons", and he gave fundraising buddy Ellison a bunch of free money. This is normal…

YouTube Launching its TikTok Clone (In India)

Right on cue… And in fact, maybe a little late, Google is launching a beta of its TikTok clone, YouTube Shorts:

Over the next few days in India, we’re launching an early beta of Shorts with a handful of new creation tools to test this out. This is an early version of the product, but we're releasing it now to bring you — our global community of users, creators and artists — on our journey with us as we build and improve Shorts. We’ll continue to add more features and expand to more countries in the coming months as we learn from you and listen to your feedback.

Vinyl Surpasses CD Sales For The First Time Since 1986

The RIAA (an acronym burned into the memory of anyone old enough to have been around during the Napster era) released its mid-year numbers, and they show vinyl surpassing (significantly) CDs in the “physical sales” category, a first since the mid 1980s:

Revenues from physical products of $376 million at estimated retail value for first half 2020 were down 23% year-over-year. There was a significant impact from music retail and venue shutdown measures around Covid-19, as Q1 2020 declines were significantly less than Q2 compared with their respective periods the year prior. Revenues from vinyl albums increased in Q1, but decreased in Q2, resulting in a net overall increase of 4% for 1H 2020. Vinyl album revenues of $232 million were 62% of total physical revenues, marking the first time vinyl exceeded CDs for such a period since the 1980’s, though it still only accounted for 4% of total recorded music revenues.

In the first half of the year, streaming accounted for 85% of revenues (they include in that the usual suspects, but also “Pandora, SiriusXM, and other Internet radio” and “ad-supported on-demand streaming services (such as YouTube, Vevo, and ad-supported Spotify)”). 📀
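The RIAA percentages are easy to cross-check from the dollar figures in the quote. A rough sketch, using only the numbers quoted above; the implied total is my own back-of-the-envelope derivation from the rounded 4% figure, not a number from the report.

```python
# Figures from the RIAA quote above (first half of 2020).
physical_revenue_musd = 376    # all physical products, $ millions
vinyl_revenue_musd = 232       # vinyl albums, $ millions
vinyl_share_of_total = 0.04    # vinyl as a share of all recorded music revenue

# Vinyl's share of physical sales, and what is left for CDs and other formats.
vinyl_share_of_physical = vinyl_revenue_musd / physical_revenue_musd
non_vinyl_physical_musd = physical_revenue_musd - vinyl_revenue_musd

# Rough implied size of the whole recorded music market (sensitive to rounding in the 4%).
implied_total_musd = vinyl_revenue_musd / vinyl_share_of_total

print(f"Vinyl share of physical: {vinyl_share_of_physical:.0%}")
print(f"Other physical formats:  ${non_vinyl_physical_musd}M")
print(f"Implied total revenue:   roughly ${implied_total_musd / 1000:.1f}bn")
```

Since CDs are only part of the non-vinyl remainder, vinyl outselling CDs follows directly from vinyl being more than half of physical revenue.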

Amazon: JOBS JOBS JOBS (part 2)

Amazon.com Inc. is hiring 100,000 full and part-time employees across the U.S. and Canada, offering starting wages of at least $15 an hour, the latest announcement in the Seattle-based e-commerce giant’s hiring spree.

The new jobs include benefits and sign-on bonuses of as much as $1,000 in select cities and access to training programs, the company said in a statement on Monday. This is in addition to the 33,000 corporate and technology employees the Seattle-based e-commerce giant announced last week, it said. (Source)

This is in addition to the 175k people that Amazon had hired during the Spring to deal with the pandemic-surge in demand (about 125k were kept in permanent positions).

At the same time, Amazon is building out its fleet of airplanes:

Between May and July, Amazon added nine planes to its air fleet, “the most it has added over a three-month span since its inception,” according to a recent study.

The company has grown its air cargo fleet to about 70 planes, up from a total of 50 last February. (Source)

Interview: Michael Seibel (Y Combinator CEO, Co-Founder Justin.tv/Twitch)

Great interview of Michael Seibel by Patrick O’Shaughnessy, mostly about the thousands of startups he’s seen and what it takes to build a business from zero. Worth listening in full (and I won’t give highlights here because this letter is getting long).

The story that Michael tells about PromisePay around the middle of the interview is worth the price of admission alone. Great stuff.

⚡️ Pearl of Wisdom

Science & Technology

Ambiq Apollo4 Super-Power Efficient System-on-a-Chip

I wrote a lot about power-efficiency in the Nvidia section of this letter… There’s a startup called Ambiq Micro that is designing extremely efficient chips, which can be used for wearables and other IoT/embedded uses.

The goal here is power-efficiency, not raw speed, so clearly tradeoffs are made. But it’s still pretty cool:

The Apollo4 system-on-a-chip is based on the Arm Holdings Ltd. Cortex M4 processor and achieves a power efficiency of three microamperes and 10 microwatts per megahertz with low deep sleep current modes. A microampere and a microwatt are one-millionth of an ampere and a watt, respectively. At those power levels, the chip can still support clock speeds of up to 192 megahertz, 2D and 2.5D graphics acceleration to power displays of up to 640X480 resolution with 32-bit color as well as integrated Bluetooth low-energy communication at 2 megabits per second.

Ambiq claims the Apollo4’s power usage is one-tenth the industry average and twice the efficiency of the company’s previous processor line. [...]

Founded in 2010, Ambiq’s processors are now in use in more than 100 million devices ranging from smart watches to home health monitors. (Source)

It’s fabbed on TSMC’s 22nm ULL process and based on a 32-bit Arm Cortex-M4 processor with FPU and Arm Artisan physical IP. How power-efficient would it be if they could afford to fab it on TSMC’s most cutting edge node? But then, it may make the chip too expensive for use cases, so here again, life is trade-offs.
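To put the per-megahertz figures in perspective, here is a very rough sketch of what they would imply at the top clock speed. It assumes both quoted numbers are per-MHz figures and that they scale linearly all the way up to 192 MHz, which is a simplification on my part and not something Ambiq states.

```python
# Figures from the quote above; reading both as per-MHz values is an assumption.
current_ua_per_mhz = 3    # microamperes per megahertz
power_uw_per_mhz = 10     # microwatts per megahertz
max_clock_mhz = 192       # top clock speed quoted for the Apollo4

# Assumes linear scaling with clock speed (a simplification, not a datasheet number).
active_current_ua = current_ua_per_mhz * max_clock_mhz
active_power_mw = power_uw_per_mhz * max_clock_mhz / 1000

print(f"Estimated active current at {max_clock_mhz} MHz: {active_current_ua} microamperes")
print(f"Estimated active power at {max_clock_mhz} MHz: {active_power_mw:.2f} milliwatts")
```

Even with generous error bars on that estimate, it lands in the low single-digit milliwatts, which is why this kind of chip makes sense in a watch that needs to run for days.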

West Coast’s Orange Skies Getting White-Balanced

The U.S. West Coast is burning, and people have been taking photos that look right out of the Las Vegas section of Blade Runner 2049. But many have had trouble getting photos that show what the human eye sees because phone cameras will automatically try to do white-balancing, and being in a completely orange world isn’t something that was tested against when the camera software was designed.

So many have downloaded third party “pro” camera apps like Halide to take “manual mode” photos without the auto-white-balance. The people at Halide are such good people that they did this, which I thought was worth highlighting:

Mensch move. Kudos.

Google Now Retroactively Carbon-Neutral

Sundar Pichai, Google CEO, wrote:

As of today, we have eliminated Google’s entire carbon legacy (covering all our operational emissions before we became carbon neutral in 2007) through the purchase of high-quality carbon offsets. This means that Google's lifetime net carbon footprint is now zero. We’re pleased to be the first major company to get this done, today.

The company is also committing to running on 100% carbon-free energy everywhere it operates by 2030.

We’ll do things like pairing wind and solar power sources together, and increasing our use of battery storage. And we’re working on ways to apply AI to optimize our electricity demand and forecasting. These efforts will help create 12,000 jobs by 2025.

The Arts

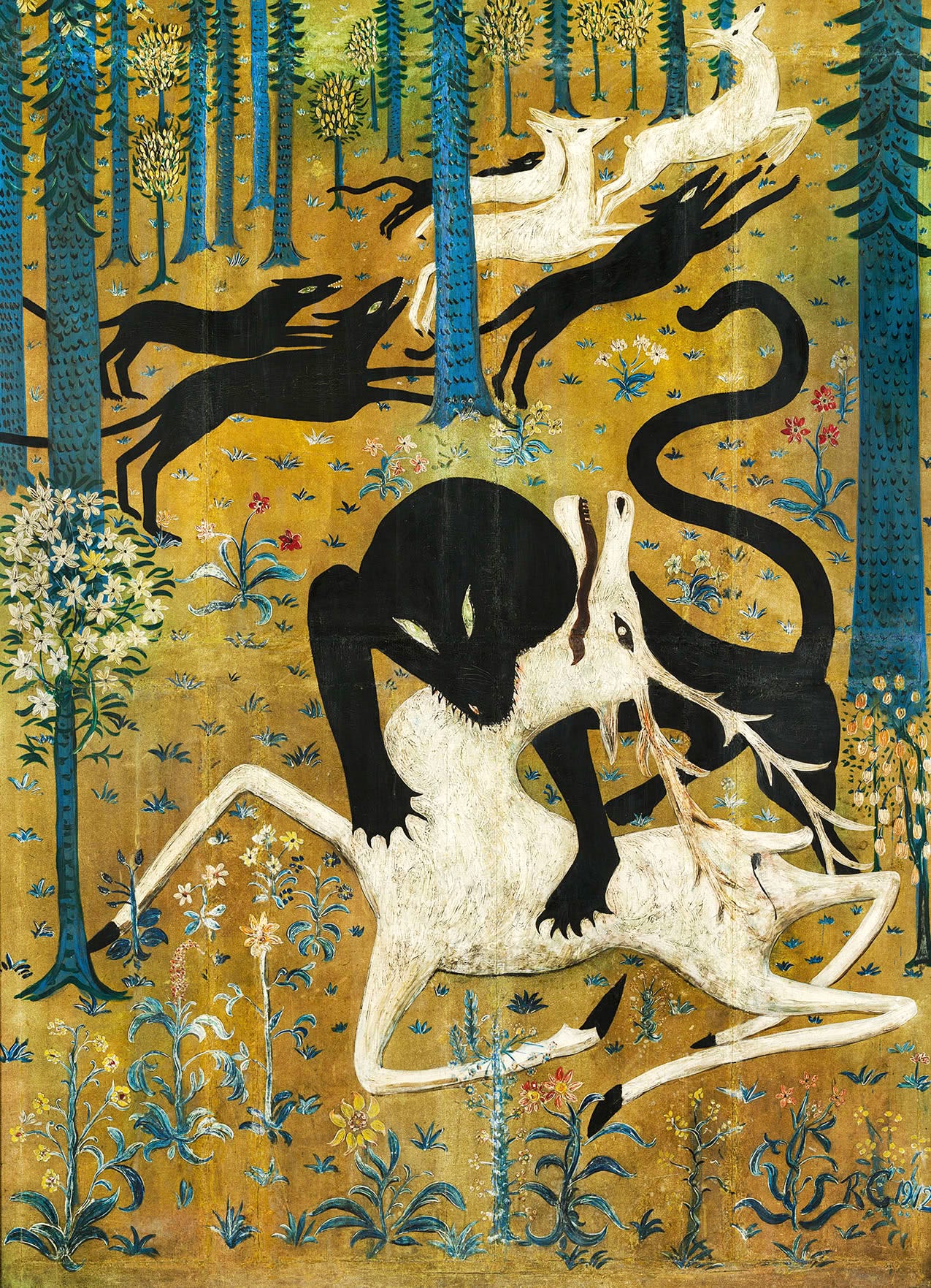

‘Leopard and Deer’ (1912)

I’ve always liked this painting by Robert Winthrop Chanler (1872 — 1930).

Chanler worked with a limited range of subject matter—mostly animals—to create allegorical fantasies in a naïve, spontaneous style. While he pulled his imagery from nature, his concern was not faithful representation but capturing the spirit of nature and of life in an emotional way. Drawing inspiration from ancient Egypt, the Far East, and the Italian Renaissance, he used rich pigments, metallic overlays, and glazes to enhance the symbolic potential of his subjects. (Source)

Ron Howard Conversation by Jim O’Shaughnessy

I almost wrote “interview”, but this is really more of a conversation between two friends, which is even better. The show notes break it down this way:

The pressure and thrill of directing a Beatles documentary

How the movie industry has evolved, and the impact of COVID-19

How documentaries differ from traditional movie making

The future of entertainment and how streaming changes things